Introduction

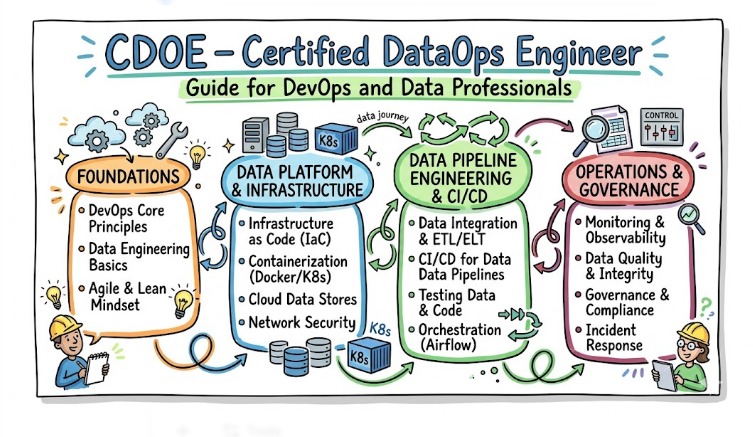

CDOE – Certified DataOps Engineer is a career-focused certification that helps professionals understand how to manage and automate data pipelines in modern cloud environments. It combines principles of DevOps, data engineering, and site reliability engineering to create a unified approach to data operations. In today’s digital ecosystem, businesses depend heavily on fast and accurate data for decision-making. This certification teaches how to ensure data reliability, scalability, and automation across complex systems. It is suitable for engineers, analysts, and architects who want to build strong expertise in DataOps and move into advanced engineering roles.

What is the CDOE – Certified DataOps Engineer?

The CDOE – Certified DataOps Engineer is a set of enterprise-grade standards for managing the technical complexity of modern data stacks. It represents the application of factory-floor automation to the digital world of data pipelines. This certification exists to ensure that as data sources and consumers multiply, the infrastructure remains manageable and cost-effective. It focuses on the architectural rigor required to support thousands of concurrent data jobs while maintaining strict quality control and high availability.

Who Should Pursue CDOE – Certified DataOps Engineer?

This path is critical for senior engineers, data architects, and infrastructure leads responsible for enterprise-wide data platforms. It is also highly relevant for technical managers in India and the global market who must oversee the transition from startup-style data projects to mature enterprise operations. Organizations that handle massive datasets—such as those in finance, healthcare, and e-commerce—require professionals who can implement these scaling standards. Whether you are managing a small team or a global department, these skills provide the blueprint for sustainable growth.

Why CDOE – Certified DataOps Engineer Is Valuable Now and Beyond

The value of this certification lies in its focus on “Architectural Scalability.” In the coming years, the volume of data generated by AI and IoT is likely to strain traditional management methods. CDOE standards address this by teaching engineers how to automate the repetitive parts of scaling. Holding this credential signals that you can handle “Enterprise-at-scale” problems, which positions you to lead an organization’s long-term digital strategy. It also steers your career toward high-level architectural design and strategic oversight.

CDOE – Certified DataOps Engineer Certification Overview

The certification is delivered by DataOps School and is hosted on their official website. The program focuses on “Design Patterns for Scale,” teaching students how to build modular, reusable components for data pipelines. The assessment requires candidates to demonstrate how they would scale a bottlenecked system without increasing technical debt. By passing this certification, a professional shows they can navigate the trade-offs between performance, cost, and reliability that arise when data systems reach enterprise levels.

CDOE – Certified DataOps Engineer Certification Tracks & Levels

The curriculum is structured into Foundation, Professional, and Advanced levels, each focusing on different scales of operation. The Foundation level covers the basic principles of modular design. The Professional level dives into technical orchestration and performance tuning at scale. The Advanced level is dedicated to global, multi-region architecture and federated data governance. This allows engineers to master the art of scaling incrementally, ensuring they have the foundational knowledge before tackling the most complex global systems.

Complete CDOE – Certified DataOps Engineer Certification Table

| Track | Level | Who it’s for | Prerequisites | Skills Covered | Recommended Order |
| --- | --- | --- | --- | --- | --- |
| Scaling Core | Foundation | All Engineers | Basic IT Knowledge | Modular Design, Lean Scaling | 1 |
| System Engineering | Professional | Data Leads, SREs | Foundation Cert | Parallel Processing, Tuning | 2 |
| Global Architecture | Advanced | Architects, Leads | Professional Cert | Multi-region, Federation | 3 |
| Infrastructure/Ops | Specialist | Platform Engineers | Foundation Cert | Kubernetes for Data, IaC | Optional |

Detailed Guide for Each CDOE – Certified DataOps Engineer Certification

CDOE – Foundation level

What it is

This certification validates an engineer’s understanding of how modularity leads to scalability. It proves they can design basic data workflows that are easy to replicate and expand.

Who should take it

It is ideal for engineers who are beginning to work on systems that are outgrowing their current manual processes.

Skills you’ll gain

- Understanding the concept of “Data as Code” for scalable infrastructure.

- Ability to design reusable pipeline templates.

- Knowledge of how to identify scaling bottlenecks in a data value stream.

- Basic understanding of cloud-native scaling patterns.

Real-world projects you should be able to do after it

- Refactoring a monolithic data script into a modular, reusable format.

- Creating a standardized environment for data experimentation.

- Designing a basic monitoring dashboard for pipeline throughput.
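The first project above, refactoring a monolithic script into modular parts, can be sketched roughly as follows. This is a minimal illustration and not material from the CDOE curriculum: the step names (`drop_missing`, `add_total`), the `build_pipeline` helper, and the sample order records are all hypothetical.

```python
from typing import Callable, Iterable

Record = dict
Step = Callable[[Iterable[Record]], Iterable[Record]]


def drop_missing(field: str) -> Step:
    """Build a reusable step that removes records where `field` is missing or None."""
    def step(records: Iterable[Record]) -> Iterable[Record]:
        return (r for r in records if r.get(field) is not None)
    return step


def add_total(records: Iterable[Record]) -> Iterable[Record]:
    """Add a derived `total` field (price * quantity) to each record."""
    for r in records:
        yield {**r, "total": r["price"] * r["quantity"]}


def build_pipeline(*steps: Step) -> Step:
    """Compose steps left to right into a single callable pipeline."""
    def pipeline(records: Iterable[Record]) -> Iterable[Record]:
        for step in steps:
            records = step(records)
        return records
    return pipeline


# The same small steps can be recombined for other datasets.
orders_pipeline = build_pipeline(
    drop_missing("price"),
    drop_missing("quantity"),
    add_total,
)

rows = [
    {"price": 2.0, "quantity": 3},
    {"price": None, "quantity": 1},  # dropped by the first step
]
result = list(orders_pipeline(rows))
```

Because each step takes and returns an iterable of records, a step can be tested on its own and reused in other pipelines without copying code.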

Preparation plan

- 7–14 Days: Focus on the principles of modularity and lean engineering basics.

- 30 Days: Practice refactoring small scripts into modular components.

- 60 Days: Complete the official study guide and take the foundational scaling quiz.

Common mistakes

- Trying to scale a “messy” manual process rather than cleaning it first.

- Over-engineering simple pipelines before actual scale is required.

Best next certification after this

- CDOE – Professional level.

Choose Your Learning Path

DevOps Path

Engineers on this path focus on the automation of the scaling process itself. They use Infrastructure as Code to ensure that as data demands increase, the underlying servers and storage scale automatically. The goal is to make scaling a “non-event” that happens silently in the background.

DevSecOps Path

This path ensures that security scales alongside data. These professionals build automated security controls that can handle thousands of concurrent pipelines without slowing down delivery. They ensure that “more data” does not mean “more risk” for the enterprise.

SRE Path

The SRE path focuses on the performance and reliability of the data platform at high loads. They gain technical skills in load testing and stress testing data pipelines to find the breaking points. They treat scaling as a performance engineering problem that can be solved with code.
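One way to make a “breaking point” concrete is to compare the throughput measured in each load-test run against a target. The sketch below assumes a hypothetical `LoadTestRun` record and an arbitrary per-job target; the numbers are invented for illustration and are not results from any real system.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class LoadTestRun:
    concurrent_jobs: int
    records_processed: int
    elapsed_seconds: float

    @property
    def records_per_hour(self) -> float:
        """Total throughput of the run, normalized to one hour."""
        return self.records_processed / self.elapsed_seconds * 3600


def first_breaking_point(
    runs: Iterable[LoadTestRun], min_records_per_hour_per_job: float
) -> Optional[int]:
    """Return the lowest concurrency whose per-job throughput misses the target."""
    for run in sorted(runs, key=lambda r: r.concurrent_jobs):
        per_job = run.records_per_hour / run.concurrent_jobs
        if per_job < min_records_per_hour_per_job:
            return run.concurrent_jobs
    return None  # no run fell below the target


# Hypothetical measurements from three ten-minute load tests.
runs = [
    LoadTestRun(concurrent_jobs=10, records_processed=1_000_000, elapsed_seconds=600.0),
    LoadTestRun(concurrent_jobs=50, records_processed=4_500_000, elapsed_seconds=600.0),
    LoadTestRun(concurrent_jobs=100, records_processed=5_000_000, elapsed_seconds=600.0),
]
breaking = first_breaking_point(runs, min_records_per_hour_per_job=500_000)
```

With these made-up figures, per-job throughput stays above the target at 10 and 50 jobs and drops below it at 100, so that is the concurrency level worth investigating.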

AIOps / MLOps Path

This path addresses the unique scaling challenges of machine learning data. Engineers learn how to scale the data feeding training clusters and manage the high-speed requirements of real-time inference. It ensures that AI initiatives can grow from small pilots to global production systems.

DataOps Path

The primary path is for those who want to be the architects of the enterprise data factory. They focus on the end-to-end orchestration that allows data to flow from thousands of sources to thousands of consumers. They are the guardians of the organization’s scalable data culture.

FinOps Path

As scaling often leads to higher cloud bills, this path focuses on “Cost-Efficient Scaling.” Engineers learn how to scale resources vertically and horizontally while maintaining the lowest possible cost. They ensure that enterprise growth remains financially sustainable.

Role → Recommended Certifications

| Role | Recommended Certifications |
| --- | --- |
| DevOps Engineer | CDOE Foundation, CDOE Professional |
| SRE | CDOE Foundation, Certified Site Reliability Engineer – Foundation |
| Platform Engineer | CDOE Professional, CDOE Advanced |
| Cloud Engineer | CDOE Foundation, Professional |
| Security Engineer | CDOE Foundation, DevSecOps Track |
| Data Engineer | CDOE Foundation, Professional, Advanced |
| FinOps Practitioner | CDOE Foundation, FinOps Track |
| Engineering Manager | CDOE Foundation, Leadership Track |

Next Certifications to Take After CDOE – Certified DataOps Engineer

Same Track Progression

For those looking to manage global-scale data platforms, the Advanced CDOE level is the ultimate step. This covers federated data governance and multi-region synchronization. It prepares you for roles such as Principal Architect or Global Head of Data Infrastructure.

Cross-Track Expansion

Many architects choose to move into specialized cloud engineering or advanced SRE tracks. Combining CDOE with a Professional Cloud Architect or Kubernetes Administrator certification creates a profile that can scale both the data and the platform.

Leadership & Management Track

As you move into strategic roles, the focus shifts to organizational scaling and technical governance. You will learn how to design the policies and team structures that allow an entire enterprise to grow. This track is essential for moving into CTO or VP of Engineering positions.

Training & Certification Support Providers for CDOE

DevOpsSchool

DevOpsSchool provides a robust curriculum focused on the enterprise-scale automation of data systems. They offer hands-on labs that simulate massive data loads, helping engineers understand how to tune pipelines for high performance. Their training is designed for professionals who need to solve real-world scaling issues today.

Cotocus

Cotocus offers advanced technical training that focuses on the architectural design of large-scale platforms. Their sessions are geared toward senior leads who need to master the art of parallel processing and high-availability data. They provide the deep technical knowledge required for the Advanced scaling exam.

Scmgalaxy

Scmgalaxy is a leading resource for community-driven learning and technical documentation. They provide a wide variety of tutorials on how to use version control and CI/CD to manage large-scale data environments. Their focus on the “how-to” of automation is highly beneficial for scaling projects.

BestDevOps

BestDevOps focuses on providing clean, curated training for high-impact engineering roles. Their modules are designed to help professionals master the scaling tools used in modern enterprises quickly. They offer an excellent balance between architectural theory and practical implementation.

Devsecopsschool

Devsecopsschool is the premier institution for security-integrated engineering at scale. They teach how to build security guardrails that scale automatically with your data pipelines. Their curriculum ensures that compliance remains a silent, automated part of your growth strategy.

Sreschool

Sreschool focuses on the reliability and performance of platforms at the enterprise level. They help engineers apply software engineering discipline to find and fix scaling bottlenecks. Their training is essential for ensuring that your growth does not lead to platform instability.

Aiopsschool

Aiopsschool provides training for managing the massive data requirements of enterprise-level AI. They focus on the unique scaling challenges found in machine learning and real-time analytics. This curriculum is vital for engineers working in data-heavy, AI-first companies.

Dataopsschool

Dataopsschool is the official provider and primary authority for the CDOE – Certified DataOps Engineer standards. They offer the most direct path to certification with official guides that focus on “Engineering for Scale.” Learning from the source ensures you master the standards correctly.

Finopsschool

Finopsschool teaches the skills needed to scale data operations without breaking the bank. They show engineers how to use cloud unit economics to keep growth cost-effective. This training is essential for any enterprise looking to scale its data platform sustainably.

Frequently Asked Questions (General)

- How does CDOE help with scaling? It provides standardized patterns for modularity and automation, allowing you to handle more data with the same size team.

- Is this only for companies with Petabytes of data? No, these standards are beneficial for any organization that is moving from manual processes to automated growth.

- What is “Modular Design” in DataOps? It is the practice of building small, reusable pipeline components that can be easily plugged together and scaled independently.

- Does the exam test my coding ability? The Professional and Advanced levels test your ability to design and optimize code-driven workflows for high performance.

- How long does it take for an architect to prepare? An experienced architect can typically prepare for the Professional scaling track in 3 to 4 months.

- What is “Federated Governance”? It is a technical strategy for allowing different departments to manage their own data while following a central set of rules.

- Is this certification valuable in India? Yes, it is highly sought after by major Indian tech giants and multinational firms managing global data platforms.

- How does this relate to “Parallel Processing”? The curriculum covers the technical patterns needed to split data tasks across multiple servers to increase speed.

- Can I use these standards on legacy systems? Yes, one of the core parts of the training is learning how to wrap legacy systems in modern DataOps standards for better scaling.

- Does the certification involve load testing? Yes, the Professional level focuses heavily on understanding performance limits and how to overcome them through technical tuning.

- How do I renew my certified scaling status? Renewal typically happens every two years through continuing education or by passing a higher-level assessment.

- Is there a focus on cost-aware scaling? Yes, the FinOps specialist track and general curriculum focus on scaling efficiently to avoid cloud bill shocks.
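The “Parallel Processing” answer above describes splitting data tasks across multiple servers. A minimal single-machine sketch of the same split-and-combine pattern is shown below, using a thread pool from Python's standard library as a stand-in for separate servers. The function names and the sum-of-squares workload are hypothetical and chosen only to keep the example small.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(items, size):
    """Yield consecutive slices of `items`, each with at most `size` elements."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def process_chunk(chunk):
    """Stand-in for real per-chunk work: sum of squares of the chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(values, chunk_size=1000, workers=4):
    """Split the input into chunks, process them concurrently, combine the partial results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = pool.map(process_chunk, chunked(values, chunk_size))
        return sum(partials)


total = parallel_sum_of_squares(list(range(10)), chunk_size=3)
```

In a real cluster the chunks would go to separate machines through a framework or job scheduler. Threads are used here only so the pattern fits in a few lines; for CPU-bound Python work a process pool or a distributed engine would usually be the better fit.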

FAQs on CDOE – Certified DataOps Engineer

- How does the curriculum handle “Data Skew” at scale? It teaches technical patterns for redistributing data loads evenly across a cluster to prevent performance bottlenecks.

- What role does Kubernetes play in scaling? The curriculum focuses on using container orchestration to dynamically scale data workers based on the current workload.

- Will I learn about high-availability data lakes? Yes, the Advanced level covers the design of data storage that can withstand multiple server or region failures.

- How are “Scaling SLOs” defined? They are usually measured by metrics like “Throughput” (how much data is processed per hour) and “Resource Efficiency.”

- Does the training cover real-time streaming at scale? Yes, it includes patterns for managing the scalability of platforms like Kafka and Spark Streaming.

- How does CDOE address technical debt during growth? By emphasizing modularity and automated testing, it prevents the “quick fixes” that usually lead to debt as systems scale.

- Is there a focus on multi-cloud scaling? Yes, the Advanced level teaches how to scale data operations across different cloud providers simultaneously.

- Is this more about “Design” or “Implementation”? It is a balanced approach that teaches you how to design the architecture and then implement the automation to run it.
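The “Data Skew” answer above mentions redistributing load evenly across a cluster. One common idea is to add a “salt” so that a single hot key no longer lands on one partition. The sketch below uses a simplified salt-offset variant of key salting. It is an illustration under assumed names (`base_partition`, `salted_partition`) and invented sample data, not the pattern the curriculum prescribes.

```python
import zlib
from collections import Counter


def base_partition(key: str, num_partitions: int) -> int:
    """Map a key to a partition with crc32, which is stable across runs."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def salted_partition(key: str, sequence: int, num_partitions: int, salt_buckets: int) -> int:
    """Shift the key's partition by a rotating salt so a hot key spreads out."""
    salt = sequence % salt_buckets
    return (base_partition(key, num_partitions) + salt) % num_partitions


# Hypothetical event stream: one hot key dominates.
events = ["hot_customer"] * 8 + ["a", "b"]
num_partitions = 4

plain = Counter(base_partition(key, num_partitions) for key in events)
salted = Counter(
    salted_partition(key, i, num_partitions, salt_buckets=4)
    for i, key in enumerate(events)
)
```

Without salting, all eight `hot_customer` events go to the same partition; with the rotating salt they are spread across all four. The trade-off is that any per-key aggregation now has to merge partial results from several partitions in a second step.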

Conclusion

From my perspective as a principal engineer, scaling is the hardest problem to solve in the data world. Most projects fail because they cannot handle the jump from a small pilot to an enterprise system. The CDOE – Certified DataOps Engineer is specifically designed to prevent this failure. It is one of the few certifications that teaches you how to think like a factory engineer for data. By mastering these scaling standards, you move from being a “troubleshooter” to an “architect.” This is a massive leap in career value. If you want to be the person who can lead a global organization through its data growth journey, this is the path you need to take. Start with the foundation, master the modularity labs, and build a career that grows as fast as the data itself.